Artificial intelligence has rapidly moved from experimental tooling to an everyday part of the developer toolkit. Within just a few years, large language models (LLMs) have evolved from simple autocomplete systems into collaborative development partners capable of navigating entire codebases, proposing architectural decisions, and generating complete test suites.

Across the industry, adoption is accelerating. Surveys suggest that roughly 85% of developers now use AI tools in some part of their daily workflow (Stack Overflow Developer Survey, 2025; JetBrains Developer Ecosystem Report, 2025), whether for generating code, understanding unfamiliar systems, or reviewing complex changes. What started as an experimental productivity boost has become a structural shift in how engineering teams operate.

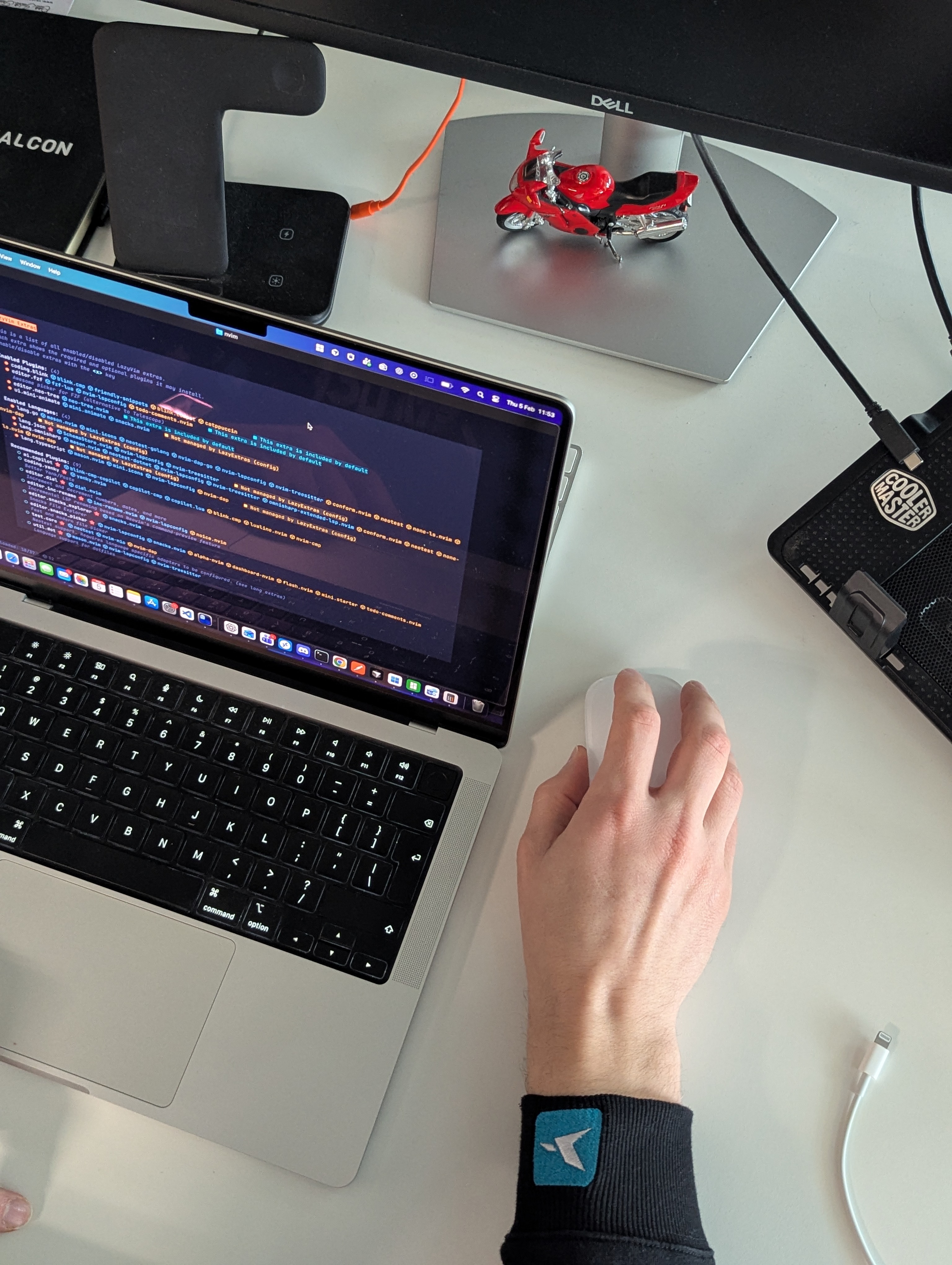

At Falcon, we began integrating LLM-based tools into real development workflows across multiple projects with different architectures and levels of maturity. The results have been both encouraging and instructive.

In some cases the productivity gains were immediate.

In others, the tools struggled or required significant guidance.

Most importantly, these experiences revealed something deeper: AI tools are powerful, but they amplify both the strengths and the weaknesses of the developer using them.